
🧠 Federated Learning with Blockchain & IPFS

📌 Project Overview

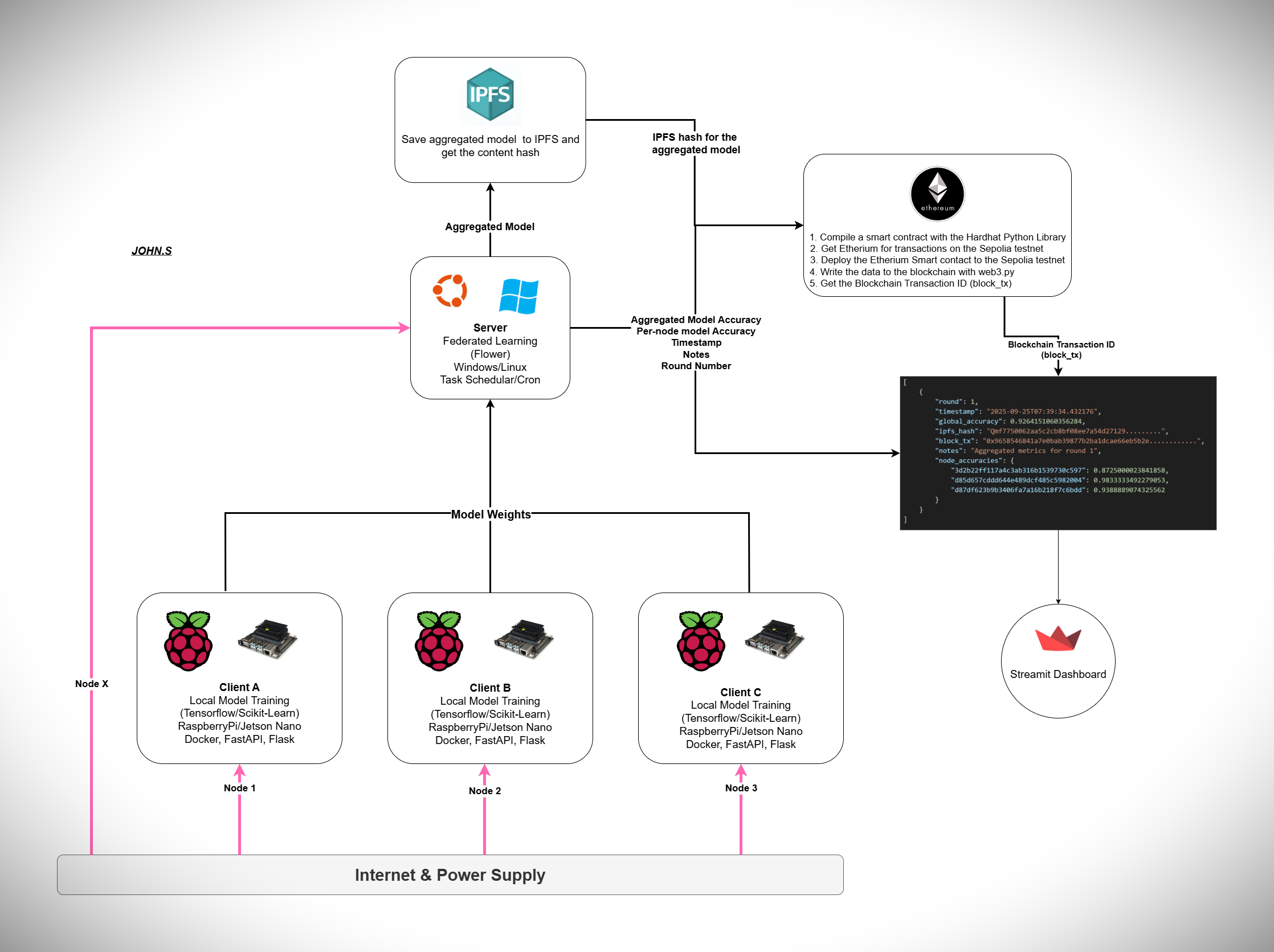

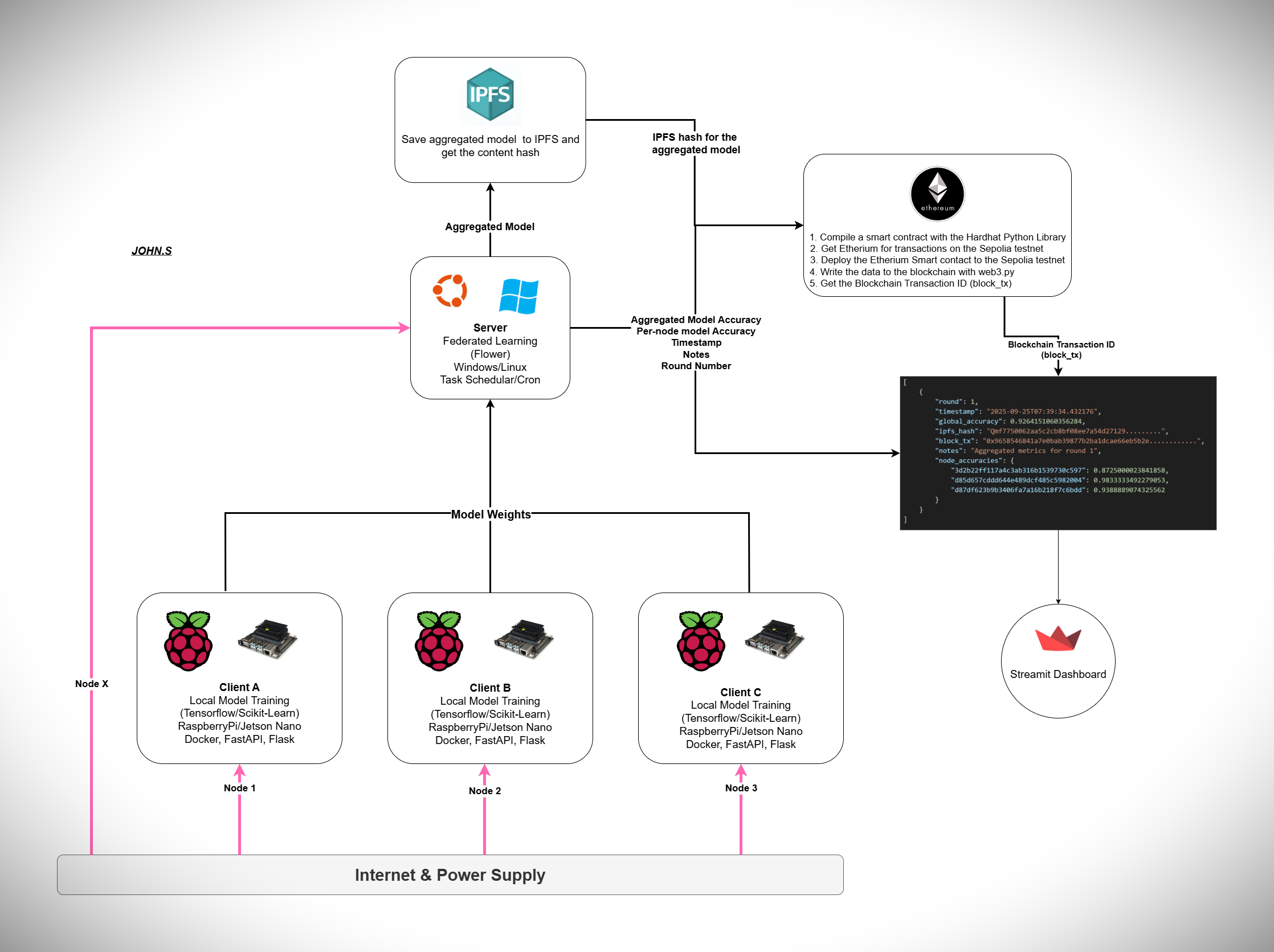

This project integrates Federated Learning (FL) with Blockchain and IPFS to create a decentralized, auditable, and transparent AI training ecosystem. The system ensures that local data from different clients (e.g., stores, hospitals, IoT devices) remains private, while model updates are aggregated securely on a central node. Each aggregation round is recorded on the Ethereum blockchain for transparency, and the aggregated global model is stored on IPFS for decentralized access.

⚙️ System Architecture

1. Clients (Local Nodes)

- Each client (Raspberry Pi or Jetson Nano device) trains a local ML/DL model using its private data.

- Stack: TensorFlow and Scikit-learn for training, FastAPI and Flask for the prediction web apps, Docker for deployment.

- Example: Client A Prediction System (Store A)

2. Central Server (Aggregator Node)

- Runs Flower (FL framework) for model aggregation.

- OS: Windows/Linux.

- Automation via Task Scheduler / Cron jobs.

- Collects model weights, aggregates them, and produces a global model.
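
The aggregation step can be illustrated with a minimal FedAvg sketch in NumPy. This is not the project's code (Flower ships its own strategy implementations); the function name `fedavg` and the toy client weights are assumptions made for illustration. Each layer is averaged across clients in proportion to the number of local training examples.

```python
import numpy as np

def fedavg(client_weights, client_sizes):
    """Weighted average of per-client layer weights (FedAvg).

    client_weights: one entry per client, each a list of np.ndarray layers.
    client_sizes:   number of local training examples held by each client.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need at least one client and one size per client")
    total = float(sum(client_sizes))
    num_layers = len(client_weights[0])
    global_weights = []
    for layer in range(num_layers):
        # Sum each client's layer, scaled by its share of the total data.
        averaged = sum(
            w[layer] * (n / total) for w, n in zip(client_weights, client_sizes)
        )
        global_weights.append(averaged)
    return global_weights

# Hypothetical toy example: two clients, each with one 2x2 layer and one bias vector.
store_a = [np.ones((2, 2)), np.zeros(2)]
store_b = [np.full((2, 2), 3.0), np.full(2, 4.0)]
global_model = fedavg([store_a, store_b], client_sizes=[100, 300])
```

Here Store B holds three times as much data as Store A, so its weights contribute three quarters of each averaged value.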

3. IPFS Storage

- The global model is saved to IPFS after each aggregation.

- IPFS returns a content hash derived from the file's contents, so any change to the model produces a different hash. This makes stored models tamper-evident.
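
The idea behind content addressing can be shown with a plain SHA-256 digest. This is only an illustration of the principle: a real IPFS content identifier is not a bare SHA-256 hex string, and the helper names `content_digest` and `verify_model` are hypothetical.

```python
import hashlib

def content_digest(model_bytes: bytes) -> str:
    """Return a SHA-256 hex digest of the serialized model.

    Illustration only: this is not how an IPFS hash is formatted.
    """
    return hashlib.sha256(model_bytes).hexdigest()

def verify_model(model_bytes: bytes, expected_digest: str) -> bool:
    """Check that downloaded bytes match the digest recorded earlier."""
    return content_digest(model_bytes) == expected_digest

# Hypothetical serialized model contents.
original = b"global-model-round-10"
recorded = content_digest(original)

untampered_ok = verify_model(original, recorded)       # same bytes
tampered_ok = verify_model(original + b"x", recorded)  # one extra byte
```

Because the digest depends on every byte, a client that downloads the model can recompute it and compare against the value recorded for that round.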

4. Ethereum Blockchain Logging

- Each training round’s metadata (accuracy, timestamp, round number, IPFS hash) is recorded on the Ethereum Sepolia testnet.

- Example Transaction (Round 10): Sepolia Transaction on Etherscan
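
One way to shape a round's metadata before handing it to a contract call is sketched below. The contract interface is not shown in this document, so the `RoundRecord` class, the `to_contract_args` method, and the basis-point encoding are all assumptions. The encoding is motivated by the fact that Solidity has no native floating-point type, so accuracy is commonly stored as a scaled integer. The values are placeholders, not results from this project.

```python
import time
from dataclasses import dataclass

@dataclass(frozen=True)
class RoundRecord:
    round_number: int
    accuracy: float   # fraction in [0, 1]
    timestamp: int    # Unix time in seconds
    ipfs_hash: str

    def to_contract_args(self):
        """Return values in a form suitable for integer-only contract fields."""
        if not 0.0 <= self.accuracy <= 1.0:
            raise ValueError("accuracy must be a fraction between 0 and 1")
        # Scale to basis points so the value fits an unsigned integer field.
        accuracy_bps = round(self.accuracy * 10_000)
        return (self.round_number, accuracy_bps, self.timestamp, self.ipfs_hash)

# Placeholder values for illustration only.
record = RoundRecord(
    round_number=10,
    accuracy=0.9125,
    timestamp=int(time.time()),
    ipfs_hash="<ipfs-hash-placeholder>",
)
args = record.to_contract_args()
```

The resulting tuple could then be passed to whatever logging function the deployed contract exposes.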

5. Dashboard & Visualization

- A Streamlit dashboard visualizes metrics such as:

  - Global accuracy trends

  - Node-specific accuracy contributions

  - Blockchain transaction logs

- URL: Federated System Dashboard

- 📊 Observation: Global accuracy improves as the number of training rounds increases, which suggests that collaboration across clients benefits the shared model.

📂 Data Flow Example

- Client A (Store A) submits input data to its prediction system.

- Local model predicts output and updates its parameters.

- Model weights are sent to the central aggregator (not raw data).

- Aggregator combines weights from all clients → generates a global model.

- Global model stored on IPFS + metadata logged to blockchain.

- Dashboard updates global accuracy & blockchain records.

- Improved model sent back to clients for further training → cycle continues.

🛠️ Frameworks & Tools Used

- Machine Learning: TensorFlow, Scikit-learn

- Federated Learning: Flower (FL framework)

- Web Apps (Client-side): FastAPI, Flask, Dockerized deployment

- Blockchain & Storage: Ethereum Sepolia testnet, Hardhat + Web3.py for smart contract & transactions, IPFS for decentralized model storage

- Dashboards: Streamlit (real-time monitoring)

- Hardware (Client Nodes): Raspberry Pi, NVIDIA Jetson Nano

🔗 Useful Links

Contact & Code Access

I am happy to discuss this project, answer any questions, or provide access to the source code for the client-server and blockchain components upon request. Please feel free to reach out to me.


📖 Documentation

🌟 Key Contributions of This System

- Data Privacy – Local training ensures raw data never leaves the client.

- Transparency – Blockchain records provide immutable proof of training rounds.

- Integrity – Models are stored on IPFS under content-derived hashes, which allow any copy to be verified.

- Scalability – Supports multiple clients/nodes with lightweight devices.

- Improved Accuracy – Collaborative training boosts overall model performance.

Practical notes, risks and recommended practices

- Privacy: raw data never leaves the client devices; only model updates are transmitted.

- Availability: ensure IPFS pinning or cloud backup so model files remain retrievable.

- Key management: protect the private key used to sign blockchain transactions. Use environment secrets or hardware keys for production.

- Audit batching: to improve throughput, consider uploading and recording on-chain every N rounds (checkpointing) instead of every round.

- Monitoring: log times for local training, communication, aggregation, IPFS upload, and tx confirmation to measure rounds/minute and spot bottlenecks.

- Resilience: handle clients dropping out. The aggregation strategy should tolerate missing updates and proceed once a minimum number of clients have responded.
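
Two of the notes above, audit batching and resilience, reduce to small decision rules. The sketch below uses hypothetical helper names (`should_checkpoint`, `can_aggregate`) and parameters; Flower's built-in strategies expose comparable settings, for example `min_fit_clients` on `FedAvg`.

```python
def should_checkpoint(round_number: int, every_n: int, final_round: int) -> bool:
    """True when this round's model should be uploaded and logged on-chain."""
    if every_n < 1:
        raise ValueError("every_n must be at least 1")
    # Always checkpoint the final round so the last model is never skipped.
    return round_number % every_n == 0 or round_number == final_round

def can_aggregate(responded: int, min_clients: int) -> bool:
    """True when enough clients returned updates to proceed with aggregation."""
    return responded >= min_clients

# Hypothetical run: 10 rounds, checkpoint every 4 rounds.
checkpoints = [
    r for r in range(1, 11) if should_checkpoint(r, every_n=4, final_round=10)
]
```

With these assumed settings only rounds 4, 8, and 10 trigger an IPFS upload and a transaction, which cuts the number of on-chain writes from ten to three.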

Quick linear summary

- Clients train locally and produce weight updates.

- Clients send updates to the central aggregator.

- Aggregator performs FedAvg to create the global model.

- Aggregated model is saved to disk.

- Saved model is uploaded to IPFS → returns ipfs_hash.

- Aggregation metadata + ipfs_hash is recorded on the blockchain → returns block_tx.

- Ledger is updated and dashboard published.

- Clients fetch or receive the new global model → next round begins.
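
The sequence above can be sketched as a single round function that also records how long each stage takes. This is an outline under stated assumptions, not the project's implementation: `run_round`, `fake_ipfs`, and `fake_chain` are hypothetical names, the IPFS and blockchain steps are injected as plain callables so the sketch runs without a node or provider, and an unweighted element-wise mean stands in for FedAvg (the two coincide only when every client holds the same amount of data).

```python
import time
from typing import Callable, Dict, List

def run_round(
    round_number: int,
    client_updates: List[List[float]],
    upload_to_ipfs: Callable[[bytes], str],
    record_on_chain: Callable[[int, str], str],
) -> Dict[str, object]:
    """Aggregate, serialize, upload, and log one round, timing each stage."""
    timings: Dict[str, float] = {}

    start = time.perf_counter()
    # Unweighted mean per weight position; a stand-in for the real aggregation.
    n = len(client_updates)
    global_weights = [sum(vals) / n for vals in zip(*client_updates)]
    timings["aggregation"] = time.perf_counter() - start

    start = time.perf_counter()
    # Stand-in for saving the model to disk before upload.
    model_bytes = repr(global_weights).encode("utf-8")
    ipfs_hash = upload_to_ipfs(model_bytes)
    timings["ipfs_upload"] = time.perf_counter() - start

    start = time.perf_counter()
    block_tx = record_on_chain(round_number, ipfs_hash)
    timings["tx_confirmation"] = time.perf_counter() - start

    return {
        "round": round_number,
        "weights": global_weights,
        "ipfs_hash": ipfs_hash,
        "block_tx": block_tx,
        "timings": timings,
    }

# Fake backends (assumptions) so the sketch runs offline.
def fake_ipfs(data: bytes) -> str:
    return f"fake-hash-{len(data)}"

def fake_chain(round_number: int, ipfs_hash: str) -> str:
    return f"fake-tx-{round_number}"

result = run_round(1, [[1.0, 2.0], [3.0, 4.0]], fake_ipfs, fake_chain)
```

Passing the storage and ledger steps in as callables keeps the round logic testable and makes it straightforward to swap the fakes for real IPFS and Web3.py calls.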